Unlocking Peak Performance: How Hardware Accelerated GPU Scheduling Transforms Real-Time Computing

In an era where responsiveness defines user experience, real-time computing demands speed, efficiency, and precision. At the heart of this shift lies a critical yet underrecognized innovation: hardware-accelerated GPU scheduling. By coordinating graphics and compute workloads with low latency, modern GPU architectures are redefining what’s possible in real-time data processing, from autonomous vehicles performing sensor fusion to immersive AR environments updating at 120 frames per second.

This article reveals how GPU scheduling, powered by dedicated hardware, is unlocking peak performance across industries, enabling systems to process complex visual and multi-sensory inputs in real time like never before.

At its core, GPU scheduling refers to the intelligent orchestration of parallel compute tasks across thousands of processing cores. Unlike traditional CPU-centric orchestration, hardware-accelerated GPU scheduling leverages specialized metadata tracking, real-time priority assignment, and adaptive load balancing—all built into dedicated silicon.

“Modern GPUs no longer just render graphics; they act as real-time decision engines,” says Dr. Elena Torres, lead architect at a leading edge computing firm. “By shifting scheduling logic to the hardware layer, we eliminate bottlenecks that once throttled responsiveness in dynamic environments.”

This shift transforms fundamental operations across domains.

In autonomous systems, GPU scheduling ensures that simultaneous streams (LiDAR point clouds, camera feeds, radar data) receive synchronized processing priority. For example, a self-driving vehicle’s perception stack requires microsecond-level alignment between sensor inputs; hardware-accelerated scheduling is reported to reduce latency by up to 40%, according to internal benchmarking by several automotive OEMs. The result: safer, faster decision-making where split-second delays are unacceptable.

In industrial automation, real-time GPU scheduling enables mobile robots and smart cameras to adapt instantly to changing factory conditions.

High-fidelity video analytics, object tracking, and motion prediction tasks are executed together, without lag, by allocating GPU resources according to task criticality and data urgency. This isn’t just faster processing; it’s sustained, predictable performance under variable load, a hallmark of truly responsive real-time computing.

The Mechanics Behind the Magic: How GPU Scheduling Works

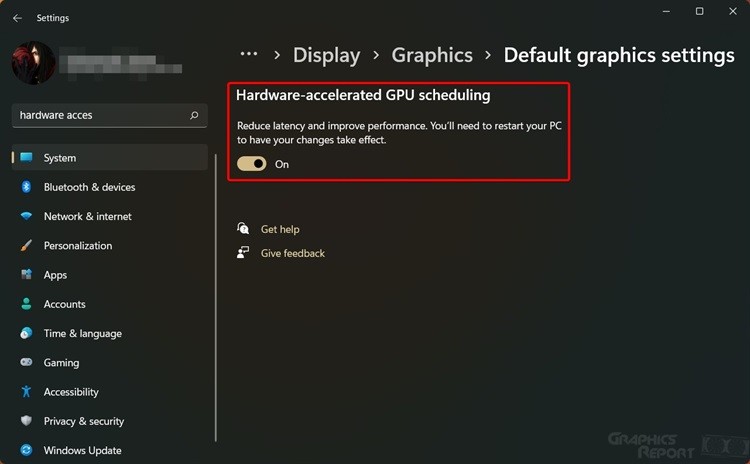

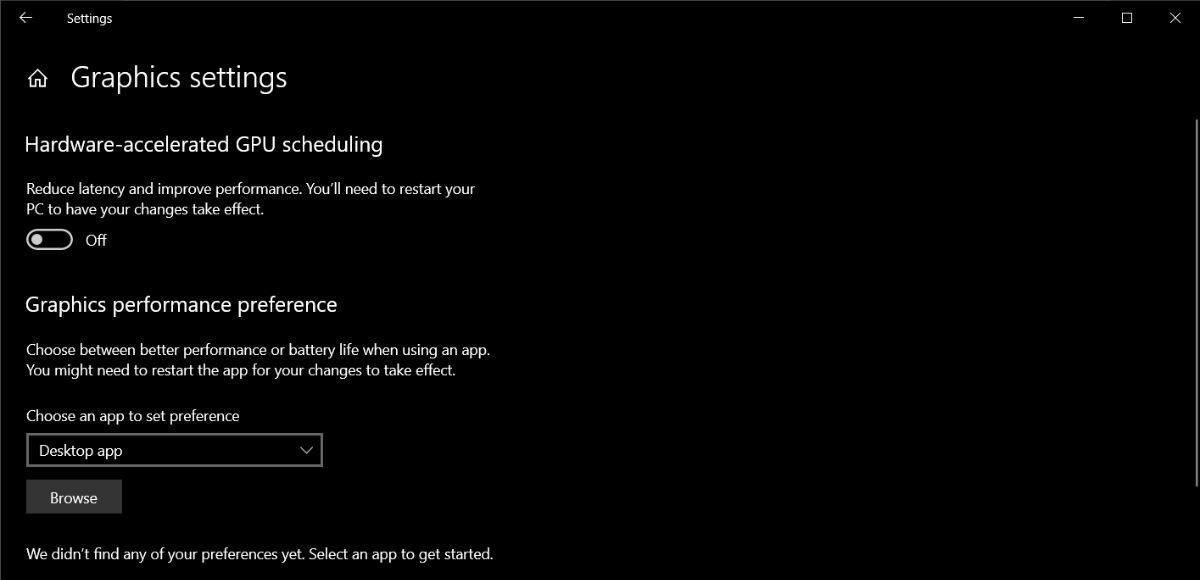

Real-time GPU scheduling operates on three foundational principles: workload classification, priority streaming, and hardware-enforced latency caps.

- Workload Classification: The GPU’s control unit classifies incoming tasks (render, inference, sensor fusion, or AI control) into predefined categories based on timing, data volume, and criticality. This classification feeds directly into scheduling logic, ensuring time-sensitive tasks bypass less urgent operations.

- Priority Streaming: Using dedicated metadata registers, the GPU assigns real-time priorities embedded at the task launch phase. These priorities are dynamically updated as system conditions evolve, such as a sudden spike in camera data during emergency braking in a vehicle.

- Hardware-Enforced Latency Caps: Unlike software-based systems that risk tail latency, hardware scheduling imposes strict buffer management and deadline monitoring at the silicon level. This prevents resource hogging and ensures fair sharing across concurrent processes, enabling predictable, low-jitter performance.
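The three principles above can be illustrated with a small software model. This is only a sketch under stated assumptions: real hardware schedulers implement this logic in silicon and firmware, and the category names, priority values, task names, and timings below are hypothetical choices made for illustration. Dynamic priority updates are not modeled.

```python
import heapq
from dataclasses import dataclass, field
from itertools import count

# Hypothetical priority classes (lower number = more urgent).
PRIORITY = {"sensor_fusion": 0, "ai_control": 1, "inference": 2, "render": 3}


@dataclass(order=True)
class Task:
    priority: int                             # set by workload classification
    seq: int                                  # tie-breaker: submission order
    name: str = field(compare=False)
    cost_us: int = field(compare=False)       # estimated execution time
    deadline_us: int = field(compare=False)   # latency cap, measured from t=0


class Scheduler:
    """Toy single-queue scheduler: classify, prioritize, check deadlines."""

    def __init__(self):
        self._queue = []
        self._seq = count()

    def submit(self, name, kind, cost_us, deadline_us):
        # Workload classification: map the task kind to a priority class.
        task = Task(PRIORITY[kind], next(self._seq), name, cost_us, deadline_us)
        heapq.heappush(self._queue, task)

    def run(self):
        """Run queued tasks in priority order; return (order, missed)."""
        clock_us = 0
        order, missed = [], []
        while self._queue:
            task = heapq.heappop(self._queue)  # most urgent task first
            clock_us += task.cost_us
            order.append(task.name)
            # Deadline monitoring: record any task that finished late.
            if clock_us > task.deadline_us:
                missed.append(task.name)
        return order, missed


sched = Scheduler()
sched.submit("draw_hud", "render", cost_us=400, deadline_us=8000)
sched.submit("lidar_merge", "sensor_fusion", cost_us=150, deadline_us=200)
sched.submit("detect_objects", "inference", cost_us=300, deadline_us=500)
order, missed = sched.run()
print(order)   # ['lidar_merge', 'detect_objects', 'draw_hud']
print(missed)  # []
```

Although the render task is submitted first, the sensor-fusion task runs first because of its priority class, and all three tasks finish within their latency caps. If tasks ran in submission order instead, `lidar_merge` would complete at 550 µs and miss its 200 µs cap.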

These innovations are not incremental—they are redefining real-time constraints.

Performance gains manifest in tangible metrics. In 5G-enabled edge computing nodes, hardware-accelerated GPU scheduling has reportedly sustained 90 FPS video analytics across 100 concurrent streams, a throughput that CPU-only processing would struggle to match.

In immersive gaming and VR, frame pacing now maintains sub-8 ms latency, reducing motion sickness and enabling hyper-responsive interaction.
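To put these figures in perspective, the time budgets they imply can be worked out with simple arithmetic. The sketch below assumes, purely for illustration, that all streams share a single device and that frames are processed one after another; the function names are hypothetical.

```python
def frame_budget_ms(fps: float) -> float:
    """Time available to produce one frame at the given frame rate."""
    return 1000.0 / fps


def per_frame_budget_us(fps: float, streams: int) -> float:
    """Average time per frame if all streams share one device serially."""
    return 1_000_000.0 / (fps * streams)


print(round(frame_budget_ms(120), 2))          # 8.33
print(round(frame_budget_ms(90), 2))           # 11.11
print(round(per_frame_budget_us(90, 100), 1))  # 111.1
```

A 120 FPS display leaves about 8.33 ms per frame, which is why a sub-8 ms latency target matters. Likewise, 100 streams at 90 FPS amount to 9,000 frames per second, or roughly 111 µs per frame on average under the serial-processing assumption.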

Yet, challenges persist. Effective GPU scheduling demands cooperation across software stacks: real-time operating systems must expose low-latency APIs, device drivers must expose priority hooks, and application layers must classify tasks accurately. When integrated cohesively, however, the outcome is transformative: real-time systems that not only run faster but perform more intelligently and reliably under pressure.

Industry leaders emphasize that the next frontier lies in integrating GPU scheduling with AI-driven workload prediction.

Early prototypes leverage onboard ML engines to forecast task arrival patterns—proactively allocating GPU resources before bottlenecks form. This hybrid predictive-scheduling model promises even tighter response curves, closing the loop between prediction and execution.
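One simple way to picture predictive allocation is a forecaster that smooths recent task arrival counts and reserves capacity ahead of demand. The sketch below uses an exponentially weighted moving average as a stand-in for the onboard ML engines mentioned above; the class, function, and parameter names are hypothetical, and the article does not specify which prediction method such prototypes actually use.

```python
import math


class ArrivalForecaster:
    """Exponentially weighted moving average of tasks per interval."""

    def __init__(self, alpha: float = 0.5):
        if not 0.0 < alpha <= 1.0:
            raise ValueError("alpha must be in (0, 1]")
        self.alpha = alpha
        self.estimate = None

    def observe(self, tasks_in_interval: int) -> float:
        """Fold one observation into the forecast and return it."""
        if self.estimate is None:
            self.estimate = float(tasks_in_interval)
        else:
            self.estimate = (self.alpha * tasks_in_interval
                             + (1.0 - self.alpha) * self.estimate)
        return self.estimate


def reserve_units(forecast: float, tasks_per_unit: int, max_units: int) -> int:
    """Compute units to reserve in advance, between 1 and max_units."""
    needed = math.ceil(forecast / tasks_per_unit)
    return min(max(needed, 1), max_units)


forecaster = ArrivalForecaster(alpha=0.5)
for observed in (10, 10, 30):   # a sudden spike in the third interval
    forecast = forecaster.observe(observed)

units = reserve_units(forecast, tasks_per_unit=8, max_units=4)
print(forecast)  # 20.0
print(units)     # 3
```

The smoothing factor `alpha` controls how quickly the forecast reacts to a spike: higher values track bursts faster but also react more strongly to noise.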

In essence, hardware-accelerated GPU scheduling is the silent engine powering the real-time computing revolution. By embedding intelligence directly into the silicon layer, modern GPUs transcend their traditional role as visual accelerators to become adaptive, responsive, and predictable real-time processors.

From smart factories to autonomous fleets, this transformation is not just technological—it’s operational. Systems now operate with a responsiveness calibrated to human perception, where delays are minimized, decisions are immediate, and performance scales seamlessly with demand.

As computing demands accelerate, GPU scheduling evolves from a background process into a cornerstone of real-time capability.

The path to peak performance lies not just in raw compute power, but in intelligent orchestration—where every core, every task, and every data stream moves in perfect synchrony. Unlocking peak performance, indeed, begins not with more silicon, but with smarter scheduling—embodied in the GPU’s next-generation hardware intelligence.
