Does It Use Live Data? How Modern Systems Power Real-Time Decision-Making

In an era where milliseconds can determine business success or user satisfaction, the question of whether technology leverages live, up-to-the-minute data is no longer theoretical—it’s operational. From healthcare monitoring to financial trading, the ability to process real-time information distinguishes cutting-edge systems from outdated models. Powered by sophisticated algorithms and interconnected data streams, modern platforms don’t just react—they anticipate, adjust, and optimize with unprecedented precision.

This article explores how advanced systems utilize live data, the technologies enabling it, and why this capability has become a cornerstone of digital transformation.

At the core of live data utilization lies a network of sensors, APIs, and edge computing devices that continuously collect, transmit, and analyze information. These sources—ranging from environmental monitors and GPS trackers to customer transaction feeds—feed into centralized or distributed data platforms.

What sets these systems apart is not just data ingestion but the speed and accuracy with which insights are derived. As ChatGPT-4 notes, “live data enables systems to detect anomalies, update forecasts, and trigger automated responses in real time.” This immediacy is critical in high-stakes environments where delayed reactions can have tangible consequences.

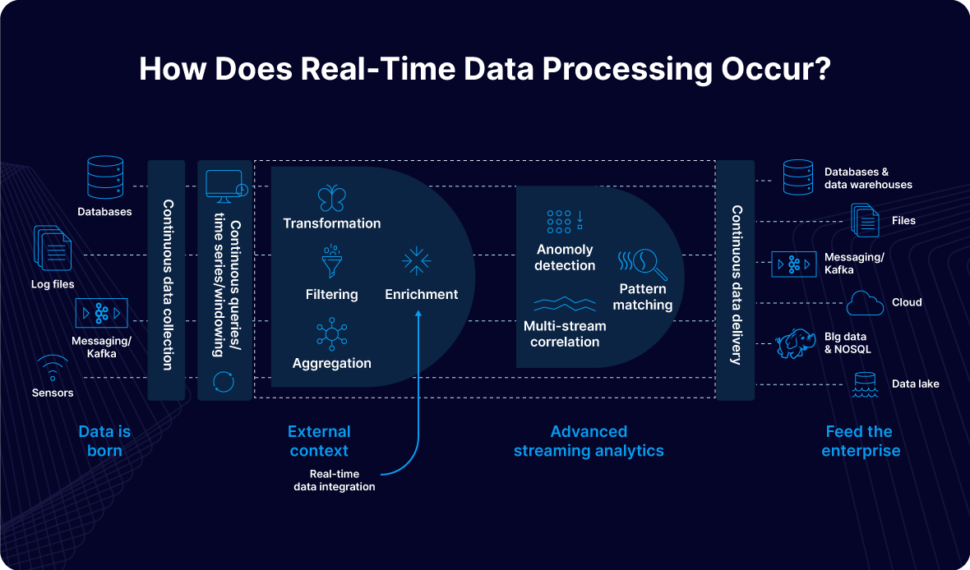

The Mechanics Behind Real-Time Data Processing

Behind every responsive system lies a layered architecture designed for continuous data flow. The first layer consists of data collection points: IoT devices, mobile apps, and enterprise software that generate streams of information. This raw data is then routed through stream processing engines, such as Apache Kafka, Flink, or AWS Kinesis, which filter, aggregate, and structure the input with minimal latency. These engines succeed by processing unbounded data streams in milliseconds rather than waiting for batch updates that arrive hours later.

Low-latency pipelines then carry the processed results to the applications that act on them. As a result, connected vehicles update their positions, healthcare monitors interpret vitals instantly, and financial platforms execute trades based on live market fluctuations, all within a fraction of a second.
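The filter-and-aggregate step that a stream processing engine performs can be illustrated with a small, engine-independent sketch. It does not use the Kafka, Flink, or Kinesis APIs; the `Reading` type, the `tumbling_window_mean` function, and the field names are hypothetical, and the code assumes readings arrive in timestamp order. It groups an unbounded iterator of readings into fixed-size (tumbling) windows and emits a per-sensor mean as soon as each window closes.

```python
import math
from collections import defaultdict
from typing import Iterable, Iterator, NamedTuple


class Reading(NamedTuple):
    sensor_id: str     # hypothetical source identifier
    timestamp_ms: int  # event time in milliseconds
    value: float


def tumbling_window_mean(
    readings: Iterable[Reading], window_ms: int
) -> Iterator[tuple[int, dict[str, float]]]:
    """Yield (window_start_ms, {sensor_id: mean}) each time a window closes.

    Assumes readings arrive in timestamp order. Production engines also
    handle late and out-of-order events, which this sketch does not.
    """
    current_start = None
    sums: dict[str, float] = defaultdict(float)
    counts: dict[str, int] = defaultdict(int)

    for r in readings:
        # Filter step: drop readings whose value is NaN or infinite.
        if not math.isfinite(r.value):
            continue
        start = r.timestamp_ms - (r.timestamp_ms % window_ms)
        # A reading from a new window closes the previous one.
        if current_start is not None and start != current_start:
            yield current_start, {k: sums[k] / counts[k] for k in sums}
            sums.clear()
            counts.clear()
        current_start = start
        sums[r.sensor_id] += r.value
        counts[r.sensor_id] += 1

    # Flush the final window when the input ends (a live stream may never end).
    if current_start is not None and sums:
        yield current_start, {k: sums[k] / counts[k] for k in sums}


if __name__ == "__main__":
    sample = [
        Reading("a", 0, 1.0),
        Reading("a", 500, 3.0),
        Reading("b", 900, 10.0),
        Reading("a", 1000, 5.0),
    ]
    for window_start, means in tumbling_window_mean(sample, window_ms=1000):
        print(window_start, means)
```

Because the function is a generator, results for a window become available as soon as the first reading of the next window arrives, rather than after the whole dataset has been collected, which is the essential difference from a batch job.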

Why Live Data Transforms Industries

The shift to live data utilization is reshaping industries in profound ways. In emergency services, dispatch centers leverage real-time GPS and incident feeds to deploy ambulances and fire crews faster, often reducing response times by over 30%. Utilities monitor grid performance with live sensors, detecting outages and rerouting power before blackouts escalate. In retail, dynamic recommendation engines adjust product suggestions based on instant customer behaviors, boosting conversion rates and customer loyalty. But perhaps the greatest impact is in financial technology, where live data drives algorithmic trading systems.

These platforms analyze streaming market data—stock prices, currency fluctuations, news feeds—to execute split-second buy or sell orders, capturing micro-moments of opportunity. A single millisecond delay can mean the difference between profit and loss in high-frequency trading. Beyond finance, industrial automation uses live sensor data to optimize manufacturing lines, adjusting machinery parameters in real time to prevent defects and maximize efficiency.

Security and Reliability: Guardrails for Live Data Systems

Integrating live data introduces significant challenges, especially concerning data integrity, security, and system uptime. Real-time systems must process vast volumes of information with strict consistency: every data point must be authentic, timely, and accurate. Cybersecurity threats, such as data spoofing or denial-of-service attacks, can compromise entire operations if safeguards are weak. Therefore, robust encryption, real-time anomaly detection, and redundant architectures form essential pillars. Data validation layers and distributed consensus protocols add further safeguards by checking incoming records and keeping replicas in agreement.

Organizations increasingly adopt zero-trust security models, treating every data source as potentially compromised until verified. These measures protect not just data but the responsiveness these systems promise.
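As a rough illustration of a validation layer combined with real-time anomaly detection, the sketch below rejects readings that are non-finite, stale, or dated in the future, and then flags values that deviate sharply from recent history using a rolling z-score. The names `is_valid` and `RollingZScoreDetector`, the 5-second freshness limit, and the threshold of 3 standard deviations are all assumptions chosen for the example, not values taken from any particular system.

```python
import math
import statistics
from collections import deque


def is_valid(value: float, timestamp_ms: int, now_ms: int,
             max_age_ms: int = 5_000) -> bool:
    """Reject readings that are non-finite, stale, or dated in the future."""
    if not math.isfinite(value):
        return False
    age_ms = now_ms - timestamp_ms
    return 0 <= age_ms <= max_age_ms


class RollingZScoreDetector:
    """Flag values far from the mean of a sliding window of recent values."""

    def __init__(self, window: int = 50, threshold: float = 3.0,
                 min_samples: int = 5) -> None:
        self.history: deque[float] = deque(maxlen=window)
        self.threshold = threshold
        self.min_samples = min_samples

    def observe(self, value: float) -> bool:
        """Return True if the value looks anomalous; otherwise record it."""
        anomalous = False
        if len(self.history) >= self.min_samples:
            mean = statistics.fmean(self.history)
            stdev = statistics.pstdev(self.history)
            # With zero variance there is no scale to compare against,
            # so this sketch does not flag anything in that case.
            if stdev > 0:
                anomalous = abs(value - mean) / stdev > self.threshold
        if not anomalous:
            # Keep anomalies out of the baseline so they do not skew it.
            self.history.append(value)
        return anomalous


if __name__ == "__main__":
    detector = RollingZScoreDetector(window=10)
    for v in [10.0, 10.1, 9.9, 10.0, 10.2, 9.8, 50.0]:
        print(v, detector.observe(v))
```

Excluding flagged values from the baseline is a design choice: it keeps one spike from masking the next, at the cost of adapting more slowly if the underlying signal genuinely shifts.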

Challenges in Sustaining Live Data Momentum

Despite advances, sustaining live data pipelines demands continuous investment. High-throughput handling strains infrastructure, requiring scalable cloud services or on-premises edge clusters capable of managing terabytes per second. Latency bottlenecks may emerge as network bandwidth or processing capacity becomes constrained. Moreover, mismatches between the data format a system expects and the streams it actually receives frequently stall pipelines, demanding agile integration tools and standardized APIs.

Maintaining interoperability across heterogeneous systems—legacy databases, modern microservices, IoT platforms—remains a persistent hurdle. Organizations must also balance real-time needs with long-term data retention for compliance, auditing, and future analytics. Yet, as processing power grows and connectivity improves, overcoming these barriers becomes increasingly feasible, enabling broader access to real-time intelligence across sectors.
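One common way to absorb format mismatches between heterogeneous sources is to normalize every incoming record into a single internal schema before it enters the pipeline. The sketch below assumes two invented input formats (a "legacy" row with an ISO 8601 timestamp string and an "iot" message with epoch seconds); the field names, the `Event` type, and the adapter registry are hypothetical and only illustrate the pattern.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Callable


@dataclass(frozen=True)
class Event:
    """Common internal schema that every source is converted into."""
    source: str
    timestamp_ms: int
    value: float


def from_legacy(row: dict) -> Event:
    # Hypothetical legacy format: ISO 8601 timestamp string, value as text.
    ts = datetime.fromisoformat(row["recorded_at"])
    if ts.tzinfo is None:
        ts = ts.replace(tzinfo=timezone.utc)  # assumption: naive times are UTC
    return Event(row["device"], round(ts.timestamp() * 1000), float(row["reading"]))


def from_iot(msg: dict) -> Event:
    # Hypothetical IoT format: epoch seconds and a numeric value.
    return Event(msg["id"], round(msg["ts"] * 1000), float(msg["val"]))


# Registry mapping a source kind to its adapter; new sources add an entry here.
ADAPTERS: dict[str, Callable[[dict], Event]] = {
    "legacy": from_legacy,
    "iot": from_iot,
}


def normalize(kind: str, payload: dict) -> Event:
    """Convert a source-specific payload into the common Event schema."""
    adapter = ADAPTERS.get(kind)
    if adapter is None:
        raise ValueError(f"no adapter registered for source kind {kind!r}")
    return adapter(payload)


if __name__ == "__main__":
    print(normalize("iot", {"id": "s1", "ts": 1.5, "val": 2}))
    print(normalize("legacy", {"device": "d1",
                               "recorded_at": "1970-01-01T00:00:02",
                               "reading": "3.5"}))
```

Failing loudly on an unknown source kind, rather than passing the record through unchanged, keeps malformed data from silently reaching downstream consumers.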

ChatGPT-4’s Perspective on Live Data Maturity

When assessing whether systems “use live data,” ChatGPT-4 emphasizes: “it depends on real-time responsiveness, data freshness, and actionable output.” The model highlights that modern AI-driven platforms, from customer service chatbots to operational dashboards, rely fundamentally on streaming inputs to function effectively. “Without live data,” it explains, “AI cannot adapt dynamically, and decisions stall in outdated silos.” This insight underscores that live data is not merely a feature, but the lifeblood of intelligent, adaptive systems.

As digital ecosystems converge and automation deepens, the capacity to harness live data is no longer optional; it is a competitive imperative.

By embedding real-time analytics into every layer of infrastructure, organizations unlock agility, precision, and foresight that redefine operational excellence. The shift to live data marks a quiet revolution, transforming how systems think, react, and evolve in a fast-moving world. Stay ahead by understanding that the future belongs to those who don’t just collect data—but live it.
